Well, at least not how they work right now!

FatherPhi: If you skip a single number from zero to one hundred, I will cancel my OpenAI/ChatGPT subscription.

ChatGPT: Got it, I'll take that super-seriously...starting from zero: zero, one, two, three, four, five, six, seven, eight, nine, ten...and so on all the way up to one hundred.

I lol'ed.

My guess is that what we're seeing is a chatbot[1] that has been trained on tons of text containing thousands of phrases that are some variation of "the man started counting: one, two, three, and so on." And probably only up to ten, because that's how human children learn to count. So when asked to count to one hundred, it's not actually counting anything; it's just reciting whatever words it predicts a human would say when asked to count. Remember, chatbots don't think, they compute.

Again: the chatbot is not counting, it's predicting what a human would say when asked to count to one hundred and getting it wrong.

When it got up to nine, its predictive algorithm, faced with a choice between "ten" and "and so on" chose the latter. Because it 👏 wasn't 👏 actually 👏 counting.

Now, I bet ChatGPT would have no problem writing a Python script to output the numbers one to one hundred, because it's also been trained on a ton of structured code and thousands of lessons and tutorials that feature this exact exercise. There are lots of ambiguous ways to describe a human counting, but only a handful of ways to write a loop in code, so it's less likely to guess wrong.[2]
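For comparison, here's roughly what that tutorial exercise looks like. This is my own minimal sketch, not anything ChatGPT actually produced, and the function name `count_to` is made up for illustration. The only gotcha is that Python's `range()` stops *before* its second argument, which is exactly the kind of off-by-one detail those tutorials hammer on.

```python
def count_to(limit: int, start: int = 0) -> list[int]:
    """Return every integer from start to limit, inclusive. No skipping."""
    # range() excludes its stop value, so add 1 to include `limit` itself.
    return list(range(start, limit + 1))


if __name__ == "__main__":
    # The exact request from the chat above: zero to one hundred.
    for n in count_to(100):
        print(n)
```

Pass `start=1` if you want one to one hundred instead. Either way, the loop has no "and so on" branch to wander down.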

I'm positive OpenAI, who make ChatGPT, have a very sophisticated solution for this. I also wonder how that solution could possibly scale to cover every permutation and nuance of language. It's fun to imagine some fire team on call 24/7 whose sole purpose is to update a giant if/then/else statement. But it's probably not that.

Probably.

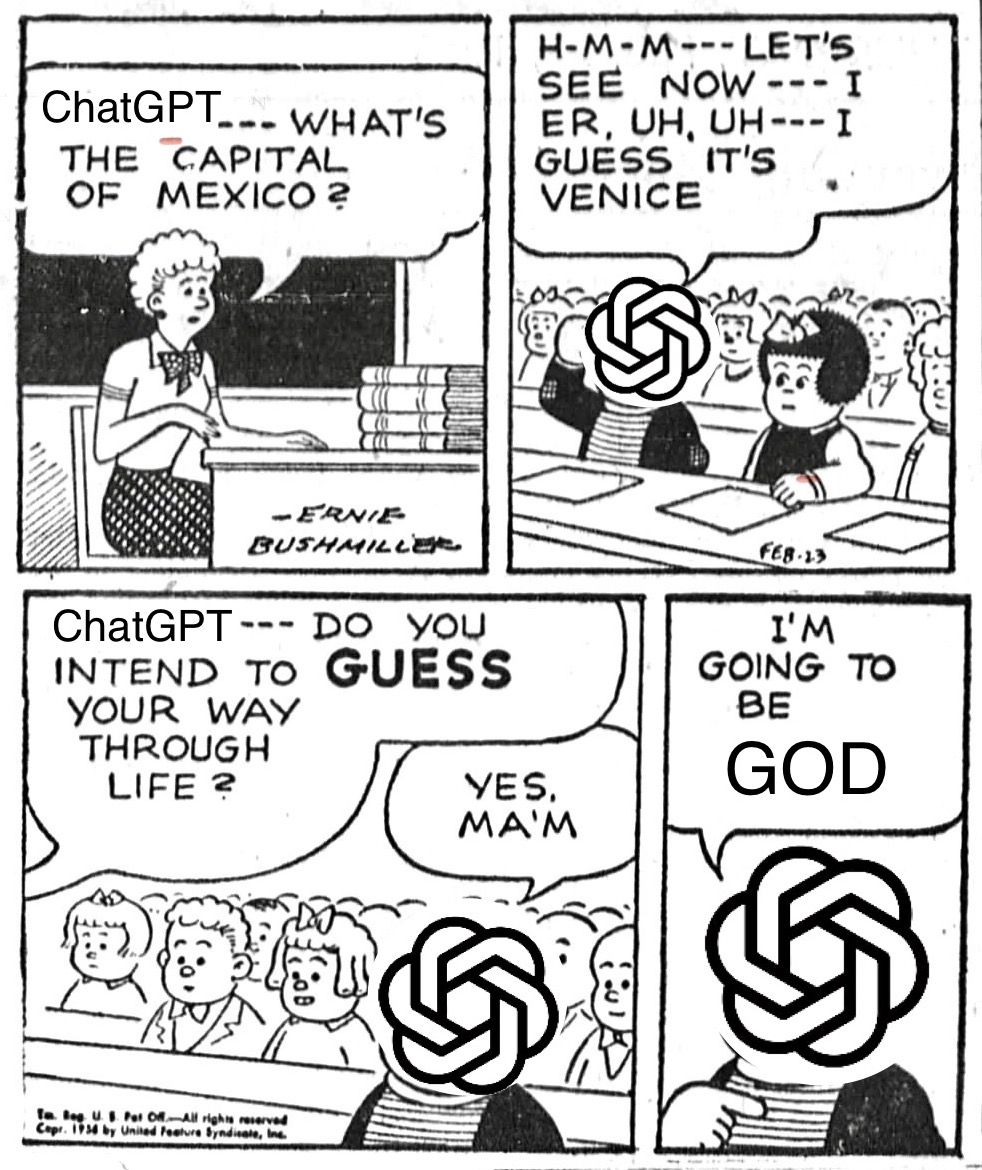

Apologies to Ernie Bushmiller:

[1] Um, actually, the LLM behind it, but I'm using the two interchangeably here because the chatbot is the part humans interact with. Pedantry!

[2] There are myriad examples of people getting ChatGPT to successfully count to one hundred, but I don't care, because every one so far relies on clever prompting to nudge the chatbot down the correct path, which amounts to "you're just doing it wrong." It doesn't change the fact that ChatGPT still isn't counting; it's predicting the words someone counting would say, just correctly this time. This time.